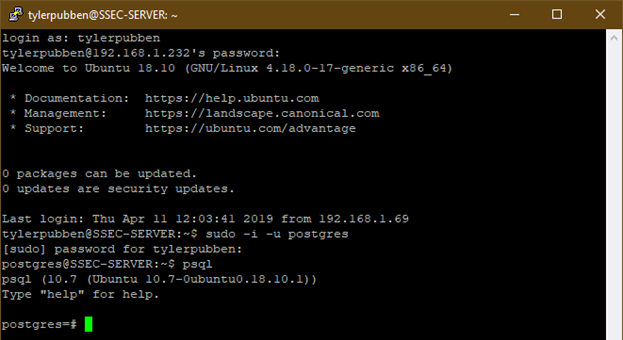

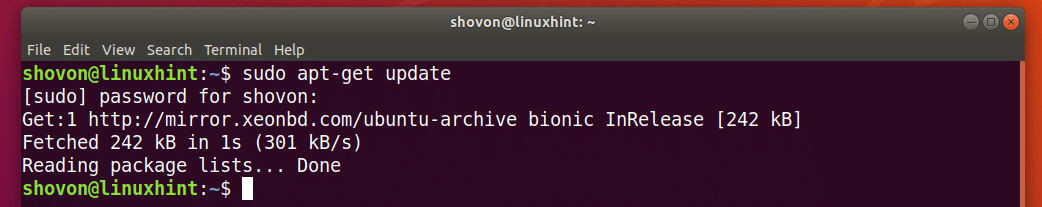

Packages and repos designed for Red Hat generally also work on Amazon Linux, since Amazon Linux is largely RHEL-compatible. You may run into compatibility issues with the steps below on the older Amazon Linux 1; they should work fine on the latest version, Amazon Linux 2.

Check the Amazon Linux version:

    $ cat /etc/system-release
    Amazon Linux release 2.0 (2017.12) LTS Release Candidate

Install the RHEL 7 yum repo for PostgreSQL:

    $ sudo yum install -y

Install PostgreSQL Client v10:

    $ sudo yum install -y postgresql10

Note! Amazon Linux 2 provides additional package installation through the Amazon Linux Extras Repository (amazon-linux-extras) (client only). Since postgresql10 is not yet available there, adding an extra yum repo is the only solution as of today.

Error: Package:

You may still install all dependencies and the server step by step with: yum install -y

When creating an RDS DB cluster or instance with postgres / aurora-postgresql DB engine and a specific EngineVersion, LocalStack will install and provision.

Since none of the previous answers worked for me, I'm adding a solution that let me install the postgresql10 client. We're using VERSION="2018.03" of the Amazon Linux AMI in our pipelines.

    $ sudo yum install -y gcc readline-devel zlib-devel

Note: The link below points to postgresql 10.4; you may want to check for newer minor versions.

The new package should be installed with all its executables in /usr/local/pgsql/bin. Keep in mind that the commands psql, pg_dump, etc. still point to the old version of the client. You can either run them with the full executable path (/usr/local/pgsql/bin/psql) or prepend the new directory to your $PATH so that the system looks there first. Edit ~/.

To export the data from your Heroku Postgres database, create a backup and download it:

    $ heroku pg:backups:capture --app example-app
    $ heroku pg:backups:download --app example-app

If you need a partial backup of your Heroku Postgres database or a backup in a non-custom format, you can use pg_dump to create your backup. For example, to create a plain-text dump from your Heroku Postgres database:

    $ pg_dump -Fp --no-acl --no-owner <your-connection-string> > mydb.dump

Use any of the supported pg_dump options as needed, such as --schema or --table to create dumps of specific schemas or tables of your database. Read more about the supported options in the PostgreSQL documentation.

To connect to your remote PostgreSQL instance from your local machine, use psql at your operating system command line. -U is the username (it will appear in the \l command). -h is the name of the machine where the server is running. -p is the port where the database listens for connections.

Load the dump into your local database using the pg_restore tool. If objects already exist in the local copy of the database, you can run into inconsistencies when doing a pg_restore.

The pg:backups:restore command expects the provided backup to use the compressed custom format. Other backup formats result in restore errors. While Heroku PGBackups can download any backup file that is directly accessible through a URL, we recommend using Amazon S3 with a signed URL. Generate a signed URL using the AWS CLI:

    $ aws s3 presign s3://your-bucket-address/your-object

Use the raw file URL in the pg:backups:restore command:

    $ heroku pg:backups:restore '<SIGNED URL>' DATABASE_URL --app example-app

DATABASE_URL represents the HEROKU_POSTGRESQL_COLOR_URL of the database you wish to restore to. You must specify a database configuration variable to restore the database.

If you're using a Unix-like operating system, make sure to use single quotes around the temporary S3 URL, because it can contain ampersands and other characters that confuse your shell. If you're running Windows, you must use double quotes.

When you've completed the import process, delete the dump file from its storage location if it's no longer needed.
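The advice about quoting the signed URL can be checked locally without touching Heroku or S3. The following is a minimal sketch for a POSIX shell; the URL is a made-up stand-in for what `aws s3 presign` would return, and the variable names are illustrative only. It passes the same URL to `printf` once quoted and once unquoted, so you can see what a command such as `heroku pg:backups:restore` would actually receive in each case.

```shell
#!/bin/sh
# Hypothetical signed URL for illustration; a real one comes from `aws s3 presign`.
SIGNED_URL='https://example-bucket.s3.amazonaws.com/mydb.dump?X-Amz-Algorithm=AWS4-HMAC-SHA256&X-Amz-Expires=3600&X-Amz-Signature=abc123'

# Quoted: printf receives the whole URL as a single argument.
quoted=$(sh -c "printf '%s' '$SIGNED_URL'")

# Unquoted: the shell treats each '&' as a command separator, so printf
# only receives the text before the first '&'. The leftover fragments are
# not valid commands; their error messages are discarded here.
unquoted=$(sh -c "printf '%s' $SIGNED_URL" 2>/dev/null) || true

echo "quoted:   $quoted"
echo "unquoted: $unquoted"
```

The unquoted form silently drops the expiry and signature parameters, which is why an unquoted signed URL tends to fail on restore rather than at the shell prompt.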

Capture and Download Backup with PGBackups

PGBackups uses the native pg_dump PostgreSQL tool to create its backup files, which makes it straightforward to export to other PostgreSQL installations. The resulting backup file uses pg_dump's custom format, which can produce much smaller files than the plain-text format.

In general, PGBackups is intended for moderately loaded databases up to 20 GB. Beyond that size, contention for the I/O, memory, and CPU needed for the backup becomes prohibitive at a moderate load, and the longer run time increases the chance of an error that ends your backup capture prematurely. For databases that are larger than 20 GB, see Capturing Logical Backups on Larger Databases.

If you have a Postgres instance on your local machine, an alternative to the dump-and-restore method of import/export is to use the pg:push and pg:pull CLI commands to automate the process.
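As a rough sketch of the pg:pull and pg:push alternative, the script below assembles the two Heroku CLI invocations. The app name `example-app` and `DATABASE_URL` come from the text; the local database name `mylocaldb` and the `run` helper are assumptions made for this example. By default the helper only prints each command, so the sketch can be tried without the Heroku CLI installed; set `RUN=1` to execute the commands for real.

```shell
#!/bin/sh
# example-app is the app name used in the text; mylocaldb is a hypothetical
# local database name chosen for this sketch.
APP="example-app"
LOCAL_DB="mylocaldb"

# Helper for this sketch only: print the command unless RUN=1 is set,
# in which case execute it.
run() {
  if [ "${RUN:-0}" = "1" ]; then
    "$@"
  else
    printf '%s\n' "$*"
  fi
}

# Copy the remote Heroku database into a local database.
pull_cmd=$(run heroku pg:pull DATABASE_URL "$LOCAL_DB" --app "$APP")

# Copy a local database up to the remote Heroku database.
push_cmd=$(run heroku pg:push "$LOCAL_DB" DATABASE_URL --app "$APP")

printf '%s\n%s\n' "$pull_cmd" "$push_cmd"
```

Before running either command for real, check the Heroku documentation for its preconditions, for instance whether the target database has to be empty or must not yet exist.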
